What are “models of reality”? (Part 6)

(This post is part of a series on working with data from start to finish.)

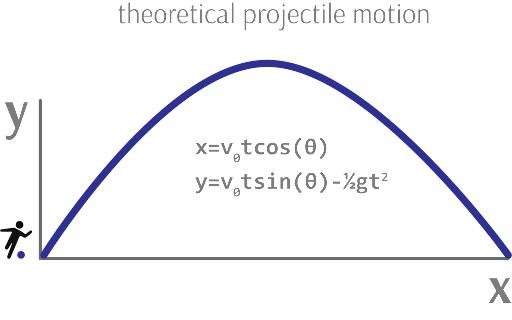

Data remains inert until it is integrated into a model of reality: a description of how the different parts of a system interact with one another. Data describes the parts of a system; models describe the relationships between those parts. On its own, data has no goal, no direction and no “variable of interest”.

Models, on the other hand, investigate causal relationships between the parts of a system: if I change this part, what happens to that part? How does the system flow? What are its inputs and outputs, its start and end? A model systematizes the answers to these questions.

As with ideal data, ideal models maximize three distinct properties:

- Accuracy

- Parsimony

- Scalability

Models should accurately explain the phenomena we observe in the real world, should be no more complex than necessary, and should generalize to a broad variety of situations (e.g., different time scales, physical scales and perspectives).

For example, Newton’s law of universal gravitation predicts the motions of falling objects, moons and planets with remarkably little error (accuracy), fits into a single equation of just four terms (parsimony), and holds across an enormous range of scales, from falling apples to planetary orbits (scalability); it breaks down only in extreme regimes, such as very strong gravitational fields, where general relativity is needed, and at the quantum scale. Similarly, an ideal software program produces no unexplainable bugs (accuracy), is easy to understand (parsimony), and, thanks to its modular design, can be reused in many different situations (scalability). Finally, the mathematical theory of fractals reduces intricate, self-similar patterns to simple recursive algorithms, and those algorithms describe the pattern equally well at every scale at which it is examined.
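Written out, Newton’s law is F = G · m1 · m2 / r², where the right-hand side combines the gravitational constant G, the two masses and the distance r between them. The sketch below is a minimal illustration of its scalability, assuming rounded textbook values for the constants and a hypothetical 100 g apple: the same one-line function covers both an apple at Earth’s surface and the Earth-Moon pair.

```python
# Newton's law of universal gravitation: F = G * m1 * m2 / r**2
G = 6.674e-11  # gravitational constant, m^3 kg^-1 s^-2 (rounded)

def gravitational_force(m1: float, m2: float, r: float) -> float:
    """Attractive force in newtons between masses m1, m2 (kg) separated by r (m)."""
    return G * m1 * m2 / r**2

# Approximate reference values in SI units, rounded for illustration.
EARTH_MASS = 5.972e24          # kg
EARTH_RADIUS = 6.371e6         # m
MOON_MASS = 7.342e22           # kg
EARTH_MOON_DISTANCE = 3.844e8  # m, mean centre-to-centre distance
APPLE_MASS = 0.1               # kg, hypothetical 100 g apple

# Same equation, very different scales.
apple_force = gravitational_force(EARTH_MASS, APPLE_MASS, EARTH_RADIUS)
moon_force = gravitational_force(EARTH_MASS, MOON_MASS, EARTH_MOON_DISTANCE)

print(f"Apple at Earth's surface: {apple_force:.3f} N "
      f"(acceleration {apple_force / APPLE_MASS:.2f} m/s^2)")
print(f"Earth-Moon attraction: {moon_force:.2e} N")
```

Dividing the apple’s force by its mass recovers the familiar surface acceleration of roughly 9.8 m/s², while the Earth-Moon force comes out around 2 × 10²⁰ N, all from the same four-term expression.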

As we will see, the pursuit of scale (the nexus of accuracy and parsimony) drives much of scientific, commercial and even artistic inquiry, evoking notions of insight, efficiency and beauty.