(This post is part of a series on working with data from start to finish.)

At last, we’ve reached the nucleus of the data infrastructure: the data warehouse. Like freight arriving at its final destination, raw data from around the company is shipped here to the data warehouse for central processing.

The data warehouse is the “single source of truth” (SSoT) at the firm. While various enterprise systems may be “systems of record” (SoRs), serving as exclusive entry points for new data and therefore sources of truth in their own right, only the data warehouse is responsible for providing accuracy guarantees across all data at the firm.

For example, a company may maintain information about its customers in both a customer relationship management (CRM) like Salesforce, as well as an enterprise marketing software such as HubSpot. It may subsequently ingest both sets of customer data into its data warehouse, for example AWS Redshift.

Here, the company would designate only one system, such as Salesforce, as their system of record for customer information. If an employee needed to update a certain customer’s name or email, they would do so in Salesforce. Any updates to customer information within HubSpot would either be automatically denied, or understood to be secondary relative to Salesforce data.

When customer information is queried within AWS Redshift, it is Salesforce data which is first and foremost exposed. A data warehouse therefore always makes explicit or implicit decisions about which source data systems are systems of record. Data within the data warehouse should always reconcile exactly with data in the system of record. If it does not, the data warehouse is in error. The data warehouse may freely fail to reconcile with non-systems of record, as they do not contain authoritative source data.

Because the data warehouse makes explicit guarantees about the quality of its data - either collected as-is from source systems or derived from a collection of source systems - it serves as the semantic backbone of an organization. It determines which systems are systems of record, adjudicates definitions between various systems, and singularly applies validation and transformation to raw data that need not be replicated across all source systems. Only the data warehouse can provide a unified, internally consistent and synoptic view of all data at the firm.

The objective of a data warehouse, like a well-run factory, is to transmute raw materials into processed goods - that is, raw data must become well-defined, functional data assets. This is achieved through three broad mandates:

- Evaluating and selecting data infrastructure tools

- Modeling and transforming data

- Administering the data warehouse

We’ll begin data infrastructure tooling, and how in particular the field has evolved over the past 50 years.

The 1970s: The emergence of the relational database

In 1970, computer scientist E. F. Codd published a seminal, 10-page paper titled “A Relational Model of Data for Large Shared Data Banks”, wherein he proposed a relational model of data and an associated “universal data sublanguage”.

At the time, data was most commonly arranged using a hierarchical model, which can still be seen today in the layout of file systems, in file formats like XML and JSON, and in HTML’s Document Object Model (DOM). Although hierarchical models reflected an intuitive way of conceptualizing data - a “person” sits within a “team” within a larger “organization” - it came with certain drawbacks.

The first was that it repeated data in multiple places and, over time, this produced data inconsistencies. We can examine this by way of the popular JSON format. Imagine we have the following data:

data = [

{

"organization":{

"name":"Acme Corporation",

"teams":[

{

"name":"Finance",

"people":[

"Steven Smith",

"Jane Doe",

"Sarah Connors",

"Evan Middleton"

]

},

{

"name":"Marketing",

"people":[

"Steven Smith",

"Kaitlyn Wood",

"Jack Feinwood",

"Steven Lanyard"

]

},

{

"name":"Engineering",

"people":[

"John Finch",

"Angela Vickers",

"Sally Beckhert",

"Robert Samueslon"

]

},

{

"name":"Human Resources",

"people":[

"Steven Vale",

"Zachary Seaward",

"Sam Slate",

"Walter Iverson"

]

}

]

}

}

]

Notice how Steven Smith appears under both the Finance and Marketing teams. If Steven leaves the firm, now we have to ensure he is removed in two locations. Should we erroneously remove him from only one team, the data will be internally inconsistent and by extension inaccurate.

The second issue relates to access paths. Imagine we want to count how many employees we have at the firm. We might write JavaScript code that looks like this:

[... new Set(data.filter(obj => obj.organization.name === 'Acme Corporation')[0].organization.teams.flatMap(obj => obj.people))].length

This says: give me the organization whose name is “Acme Corporation”, look within their associated teams for people and count the distinct number of names (here, 15 in total).

Notice how, to answer this question, we must follow a particular path, typically called a “query access pattern”. This path represents the logical hierarchy of entities. To find what you are looking for, you must always start at the top of the hierarchy (or root of the tree) and navigate to the bottom.

Despite us only needing a list of people at the firm, we must unnecessarily route through unrelated “nodes” of the hierarchy - such as the teams people sit within - to get what we’re looking for. When scaling up to large data sizes, scanning billions or trillions of records per query, such inefficiencies invariably become slow and expensive.

Codd, in his 1970 paper, invented a system for organizing data which was effectively “access pattern free”. It would rely on free-floating “relations” (more commonly known as database tables) which specified relationships to one another. There would be no hierarchy and no tree, only a flat landscape of tables and their associations. It would become known as the relational model of data.

Codd’s formulation was based entirely on relational algebra, which meant all data operations could be represented mathematically using predicate logic. If you passed a collection of input relations (tables) through a handful of well-known set functions, such as set union or set difference, then you could guarantee the output relations (result sets), no matter how they were computationally arrived at.

In other words, if you started with two data sets and wanted the intersection of values between them, you knew what the result would be, even if you didn’t exactly know how you’d compute it. One such algorithm would be iterating through each value in the first set, checking if it existed in the second set, and only if so placing it into a third “intersection” result set. Other algorithms include binary search, merging search and fast set intersection.

Some implementations would be faster or slower, but thanks to relational algebra, we were always assured of what the output would be. This effectively cleaved two layers into the design of database systems: the first, which specified mathematically what we wanted, and the second, which actually implemented it.

The former became the “data sublanguage” that Codd had originally envisioned: SEQUEL (later called SQL), invented by Codd’s IBM colleagues Donald Chamberlin and Raymond Boyce in 1973.

The latter became the “query engine”, the workhorse at the center of the database, which parsed incoming SQL statements and assigned the most efficient “query plan” to execute them. Whether the query engine ordered data using a merge sort or quick sort or heap sort was entirely obscured to the end user. Propelled from beneath by the query engine, database users could write SQL joins, filters and aggregations - all enabled by the guarantees of relational algebra - without ever having to worry about how exactly to implement them.

The 1980s: Relational databases take over, but not over everything

By the late 1970s, Codd’s ideas had permeated beyond the walls of IBM and began to see wider acceptance in the broader technology community. A then-unknown software engineer recognized the potential of Codd’s work and released the first commercial version of the relational database management system (RDBMS) in 1979.

That software engineer was Larry Ellison, who went on to found the technology titan Oracle, a $100B+ enterprise which today houses the data of many of the largest firms and governments in the world. This would not be the first time that lucrative technology had escaped the clutches of technology incumbent IBM. Just years prior, IBM outsourced development of the PC operating system to a fledgling company named Microsoft, which would soon vault into becoming one of the world’s most valuable technology companies.

By the late 1980s, relational databases had thoroughly embedded themselves into the circulatory system of every major enterprise and government. Airlines, banks, hospitals and municipalities all eagerly threw their data into relational databases. The moment you had software automating business processes, you now also had a relational database behind the scenes storing and manipulating the data.

As more and more organizations piled their data into relational databases, a new problem emerged. Early databases were designed to rapidly store and retrieve individual records, but they were not necessarily designed for large-scale analytics. If an airline wanted to know which flight routes featured the highest profit margins over the past five years, they would likely run into at least three issues.

The first was that they would have to join many, many tables together. Early databases were designed for “online transactional processing” (OLTP), which prioritized inserting, updating and deleting data over and above reading data. To do so quickly, the structure of the data, or “schema”, needed to be as federated as possible.

Every logical entity - a passenger, a ticket, a flight, a booking - needed to be its own table. This sprawling data model, the pinnacle of which was Codd’s “third normal form” (3NF), ensured data consistency when calculating results, but also made analytical work more complex.

The second issue was that there was often no singular database which contained all the information required to perform certain analytical queries. An airline might keep flight information in its flight management system, booking information in its ticket management system, and financial information in its accounting system. Each of these were backed by separate relational databases.

Finally, large analytical queries were computationally expensive, and burdening operational databases with such queries routinely caused the databases to crash. These crashes would subsequently cascade into a failure of the entire production system.

To address these issues, database designers opted to create a secondary, read-only “data warehouse” whose exclusive use would be analytical in nature. It would be a relational database, just like the OLTP databases, but would be isolated from production systems and would consolidate all information between disparate databases. It would replicate data byte-for-byte from operational systems via ETL processes and feature a “denormalized” schema to reduce the amount of joins required to query data.

This database, designed first and foremost for “online analytical processing” (OLAP), would herald a new era in large-scale analytics: that of data warehousing.

The 1990s: From databases to data warehouses

By the early 1990s, most large enterprises utilized a data warehouse to support complex analytical work. At the same time, they found their business users increasingly using spreadsheets to perform small-scale data analysis. The first popular spreadsheet program was Lotus 1-2-3, released in 1983, which was soon eclipsed by Microsoft Excel, which launched in 1985.

With the aid of spreadsheets, business users could analyze data via a simple, convenient, graphical user interface (GUI) instead of the more abstruse command-line interface (CLI) provided by relational databases. They could perform the hallmark of data analysis - filtering, pivoting and aggregating - all by point-and-click rather than SQL commands.

However, they could only do it for “small data” - that is, the amount of data which fit into the spreadsheet. For Excel, that was 1,048,576 rows. Data analysis therefore forked into two paths: the one where you had small enough data which fit into Excel, and the other where you didn’t and needed to perform analysis directly within the data warehouse.

When it came to the data warehouse, there was no clear consensus on how exactly to structure the data. We knew it should be less federated (or “normalized”) than the OLTP database, but how much less?

In 1992, Bill Inmon published Building the Data Warehouse, where he argued that the data warehouse should be as normalized as the OLTP database, only differing insofar as it contained all firm-wide data and not just the data for a given application. In Ralph Kimball’s 1996 The Data Warehouse Toolkit, Kimball instead suggested the data warehouse should follow a more denormalized structure to simplify analysis for business users.

Kimball’s methodology, which he called “dimensional modeling”, revolved around central “fact tables” surrounded by supporting “dimension tables.” Fact tables aggregated raw data into quantifiable measures, such as the number of users visiting a website per day, whereas dimension tables allowed users to pivot those facts by various dimensions, such as “by region” or “by marketing channel”.

Using two simple techniques - aggregations and filters applied to dimensions, and calculations to facts - business users had everything they needed to analyze data at scale rapidly and comprehensively.

Despite their differences, both Inmon’s and Kimball’s architectures remain widely used today, and in fact are often used in parallel. It is Inmon’s approach which is used at the initial stages of data processing (called “base” or “staging” areas), while it is Kimball’s which is used for the final stages (as fact and dimension tables are molded into business-consumable “data marts”).

By the late 1990s, there remained one final frontier which lay beyond the reach of conventional OLAP data warehouses: “big data.”

The 2000s: Big data and data lake architectures

Despite being able to facilitate large-scale analytics, OLAP data warehouses could not service truly massive data sets. To store and process big data, they needed to be “vertically scaled”, which required an upgrade of the entire physical machine, instead of “horizontally scaled”, where machines could be conjoined infinitely to form a virtual data warehouse.

A single, physical machine serving as the firm-wide data warehouse created three problems. First, it constituted a single point of failure for analytics in the event of a server crash. Second, vertical scaling after a certain point was challenging, if not impossible, as there are only so many physical slots onto which you can attach additional hard drives or RAM. Finally, calculations could not be performed in parallel across multiple processors, instead being performed sequentially within a single one.

Due to these limitations, there remained an upper limit on just how much data a single-machine data warehouse could process. This all changed with the advent of the Hadoop Distributed File System (HDFS), invented by Doug Cutting and Mike Cafarella in 2002.

Unlike traditional databases, HDFS was “distributed-first.” Rather than storing all data on a single machine, it instead parceled out data to a network of connected machines. Data would be replicated redundantly across the network to minimize the risk of permanent data loss and improve the data’s “durability”. New machines could be added to the network “infinitely”, thereby expanding the network’s collective disk space and compute power.

The hardware for these underlying machines was secondary. They could be old servers, new servers or storage servers. What mattered was the distributed software orchestrating from above: HDFS.

By 2004, HDFS had proved its mettle and began to see wider adoption. That year, Jeff Dean and Sanjay Ghemawat at Google published a paper titled “MapReduce: Simplified Data Processing on Large Clusters”, where they introduced a new software for processing data across a distributed file system such as HDFS.

MapReduce worked by “mapping” a data operation to all machines on the network, gathering the results (“shuffle and sort”), and finally “reducing” those results using some form of aggregation.

For example, if an airline wanted to calculate the number of passengers traveling on every flight route this year, it would “map” an extraction operation for passenger information to all nodes, shuffle and sort the results by flight route, and finally “reduce” the results by calculating the number of passengers for each flight route.

Although powerful, the original MapReduce program was written in Java, slow to develop in, and not particularly user-friendly. Programming interfaces soon emerged to simplify working with MapReduce, such as Spark (written in Scala), PySpark (written in Python), Hive (offering SQL semantics) and Pig (a command-line interface).

Spark and PySpark in particular would later blossom into an expansive ecosystem of libraries which, broadly speaking, applied batch ETL, data streaming and machine learning pipelines to “big data” for the very first time. In addition, the tools were entirely open-source, meaning you could assemble the entire infrastructure yourself without having to rely on any external vendors (and the attendant risk of future vendor lock-in).

Collectively, HDFS and Spark formed the foundation of what became known as “data lake” architectures. Unlike a relational database, HDFS enforced no schema and no structure upon the data launched into the file system. Further, Spark could process any type of data: unstructured, semi-structured, image, audio and so on.

As a result, the guardrails to data collection came off: if we could capture and store anything in the “infinite file system”, then why wouldn’t we? It is at this point, around 2005, that the era of “big data” began in earnest. Data lake architectures proliferated, and firms began collecting more data than ever before. HDFS and Spark were in; the OLAP database was out.

Even in the beginning however, there were objections to the new distributed data paradigm.

First, if you could throw anything into HDFS, without any structure or form, you often ended up with a “data swamp” rather than a data lake. Second, setting up the Hadoop ecosystem was no small task and often took a team of engineers to configure and manage. Third and finally, Spark was not intuitive, meaning that analysis, although directed by business stakeholders, ultimately had to be implemented by engineers.

For the latter half of the 2000s, it appeared as though this was the end state of data infrastructure. Companies were finally able to analyze big data, but only if they invested into a large team of engineers to help them do so.

The 2010s: Distributed data warehouses arrive

Beginning in the 2010s, the data landscape would fundamentally shift once again with the renaissance of the data warehouse. In 2011, Google launched BigQuery, the first vendor-managed, distributed, relational data warehouse.

Behind the scenes, BigQuery utilized Google’s own distributed file system called Colossus, and its own map-reduce system called Dremel. What was exposed to the end user, however, was a simple interface data practitioners had long been familiar with: SQL. In releasing BigQuery, Google revived the OLAP data warehouse. This time however, it would be distributed.

Amazon followed suit in 2013 with its offering called Redshift, and Snowflake publicly launched its distributed data warehouse in 2014. Now, anyone could use SQL to process and analyze big data, not just the data engineers who were able to grapple map-reduce.

Distributed data warehouses of course were not quite like traditional data warehouses. The architecture on the backend was completely different; it was only the frontend which gave the appearance of a data warehouse.

The most salient difference and raison d’être for distributed data warehouses was that they could “infinitely scale”: you could grow your data footprint and compute requirements without ever having to vertically or horizontally scale your infrastructure. Everything could be “auto-scaled”, managed entirely by the vendor in the cloud.

Because the distributed file system and the map-reduce “compute cluster” were two separate systems, you could also resize one without changing the other. Unlike vertically scaled data warehouses, where increasing your RAM was attended by increased disk space and processor power (i.e. an upgrade of the overall server), a distributed data warehouse allowed you to marry small compute with enormous data for efficiency, or large compute with small data for speed.

As a result of this decoupling, large volumes of data no longer needed to be stored in powerful but expensive, vertically-scaled servers. The data itself could be stored on cheap, commodity servers, while the more expensive map-reduce jobs could live on big servers with powerful processors. While the cost of compute continued its perennial decline (thanks to Moore’s law), the cost of storing data plummeted.

The 2020s: Managing big data pipelines using “just SQL”

By the 2020s, distributed data warehouses like Google BigQuery, AWS Redshift and Snowflake had firmly taken hold in the data infrastructure landscape. Data lake architectures, such as the cloud-managed Databricks or self-managed Hadoop, persisted, but waned in popularity. SQL had returned to usurp Spark.

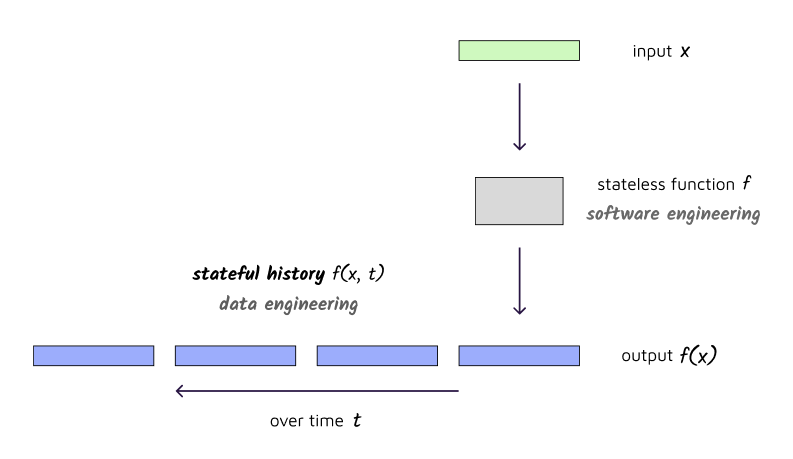

Despite its simplicity however, SQL had one critical deficit that Spark did not: SQL was not a data pipeline. While Spark could sequentially and incrementally process raw data into enriched, business-consumable data, SQL could only perform one operation at a time.

In the early days of single-machine data warehouses, SQL statements were stitched together using a patchwork of “stored procedures” and “triggers”: after this table is updated, update the next, and so on. Together, these SQL statements implicitly congealed into “dependency graph” or, more technically, a “directed acyclic graph” (DAG).

By the 1980s, this tangled web of SQL code was plucked from the database and dropped into “ETL tools”, such as Microsoft’s SSIS or Talend’s Open Studio. These tools enabled data engineers to visualize the DAG, monitor pipeline health, alert on errors, automatically retry on failures, provision hardware resources, and manage the ETL schedule.

If you didn’t use a vendor to orchestrate your data pipelines, then you typically wrote your own. The most primitive of these was a simple cron job running a bash script (“run this SQL code at 6pm daily”), while the more sophisticated ones built custom frameworks in Python or Java to construct the DAG.

In 2014, batch data processing experienced a breakthrough with the public release Airflow, a data pipeline orchestration tool, created by Maxime Beauchemin at Airbnb. Airflow had all the features of historical, vendor-based ETL tools, but also contained two important differences.

First, DAGs were not developed by point-and-click within the GUI, but instead were specified in the underlying Python code. Thanks to Airflow, DAGs for the first time became first-class concepts, meaning entire pipelines could be copied, re-arranged and nested in order to improve architectural design.

Second, Airflow was free and open source, meaning once again that companies could build their own tooling in-house without having to worry about vendor management, costs and lock-in. Over the coming years, Airflow took the data industry by storm and rapidly became the de facto solution for managing data pipelines.

Although Airflow was simpler than writing your own DAG framework or wrestling the archaic Microsoft SSIS, it was not that simple. You needed to code up the DAG in Python, understand various “operators” to execute pipeline tasks, and - like a flight controller monitoring dozens of flight paths each day - decipher the kaleidoscopic dashboard to see which jobs succeeded and which jobs failed. The data engineer was therefore still integral to constructing, managing and monitoring the data pipeline.

This changed with the launch of dbt, or “data build tool”, in 2016. Developed by the team at Fishtown Analytics, dbt offered a simple data pipeline wrapper around SQL statements, no Python required. Data analysts and engineers could write their plain SQL in an editor, test it in the data warehouse, and more or less copy-and-paste it into dbt to weave it into the data pipeline. With dbt, the entire data pipeline was just SQL.

But what did this data pipeline look like? How exactly did it transmute raw data into business-ready data? And what does it mean to produce business-ready data anyway?

That brings us to the next major topic in data warehousing: data transformation, in particular, using SQL and dbt.

]]>